Rolling with the Punches: The European Commission’s Approach to the Sharing of Search Data under Article 6(11) DMA

April 29, 2026

ABSTRACT

Article 6(11) of the Digital Markets Act obliges Google, as the sole designated gatekeeper for online search engine services, to share ranking, query, click and view data with third-party undertakings on fair, reasonable and non-discriminatory terms, whilst ensuring that any personal data is duly anonymised. Google’s successive compliance efforts fell short of the European Commission’s enforcement expectations, prompting the latter to trigger specification proceedings under Article 8(2) DMA and publish Implementation Measures addressing five core pillars: eligibility, data scope, anonymisation, FRAND pricing and dataset acquisition.

This article critically examines each pillar in turn, arguing that whilst the EC’s shift from frequency thresholding to entity and length-based thresholding represents a meaningful technical improvement, residual vulnerabilities remain unaddressed. The extension of the provision’s scope to AI chatbots, the incremental cost-based pricing methodology and the auditing requirements for dataset access each raise further questions that the Implementation Measures leave, at best, partially resolved.

Introduction

Article 6(11) DMA1 establishes a general obligation on all gatekeepers designated for online search engines (in practice, Google) to provide access to ranking, query, click and view data to third-party undertakings providing online search engines. Upon a third party’s request, the gatekeeper must provide access to the data on fair, reasonable and non-discriminatory (FRAND) terms. In parallel, it must ensure that any shared data constituting personal data is duly anonymised. The obligations resemble some of the remedies imposed by U.S. District Judge Mehta in September 2025, which ordered Google to share its proprietary search data with competitors.2 High expectations have therefore been placed on Google to deliver on its regulatory and judicial obligations.

The designated gatekeeper has not lived up to the expectations of antitrust and regulatory enforcers. In the particular case of Article 6(11) DMA, the European Commission (EC) considers that Google’s efforts have not met the threshold of effective compliance. It therefore decided to open specification proceedings under Article 8(2) DMA to nail down the implementation measures with which it expects the gatekeeper to comply.3

Three months later and abiding by the time-constrained procedure of specification proceedings, the EC has issued the Implementation Measures it expects Google to adopt and has opened a public consultation that will close on 1 May.4 In the spirit of providing useful feedback that can nourish the broader debate, here are my main thoughts on the EC’s proposed Implementation Measures as they currently stand.

The implementation of Article 6(11) DMA: Major concerns and stakeholder backlash

To date, the originally designated gatekeepers have published three rounds of compliance reports, for 2024,5 2025,6 and 2026.7 Google is the only gatekeeper designated for an online search engine as a core platform service (CPS) and, thus, the only gatekeeper that must implement Article 6(11) DMA. Its technical implementation of the provision has evolved over time around three main tenets, each of which has changed substantially over the last three years: i) the scope of data to be disclosed upon request of third parties; ii) the anonymisation techniques used to comply with data protection requirements; and iii) the terms applicable to data access, notably pricing and access conditions.

In 2024, Google announced that it had developed a new European Search Dataset Licensing Program through which third-party online search engines could obtain Google Search data on more than one billion distinct queries across all 30 EEA countries.8 As disclosed by Google at its first compliance workshop, the Dataset covered several data fields, such as query strings, country, device type, result, average rank, impression counts and click counts.9 To comply with data protection requirements, the Dataset was anonymised through frequency thresholding, i.e., a technique that protects personal data by hiding or removing information that is too unique or rare. Frequency thresholding works by setting a minimum number of users required for data to be displayed in a report. As presented by Google at the compliance workshop, a query would be included in the Dataset only if it had been entered by more than 30 signed-in users globally over the last 13 months as of the relevant quarter. In addition, Google would not provide data for results viewed by fewer than 5 signed-in users in a given country and device type.
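To make the mechanics concrete, here is a minimal Python sketch of frequency thresholding as described above. It is not Google’s code: the log format, the function name `frequency_threshold` and my reading of the thresholds (a query must be entered by more than 30 distinct signed-in users worldwide; a result row needs at least 5 distinct signed-in viewers per country and device type) are assumptions made for illustration.

```python
from collections import defaultdict

# Assumed reading of the 2024 thresholds presented by Google (illustrative only).
QUERY_MIN_USERS = 30   # query kept only if entered by MORE than 30 signed-in users
RESULT_MIN_USERS = 5   # result row dropped if viewed by fewer than 5 signed-in users

def frequency_threshold(log):
    """Apply whole-string frequency thresholding to a hypothetical search log.

    log: iterable of (user_id, query, country, device, result) tuples.
    Returns (query, country, device, result, distinct_viewers) rows that survive.
    """
    query_users = defaultdict(set)    # query string -> distinct users worldwide
    result_users = defaultdict(set)   # (query, country, device, result) -> distinct users
    for user, query, country, device, result in log:
        query_users[query].add(user)
        result_users[(query, country, device, result)].add(user)

    released = []
    for key, users in result_users.items():
        if len(query_users[key[0]]) <= QUERY_MIN_USERS:
            continue  # the exact string is too rare globally: every row for it is dropped
        if len(users) < RESULT_MIN_USERS:
            continue  # too few viewers in this country/device cell
        released.append((*key, len(users)))
    return released
```

The design choice that matters is that the whole query string is the unit of counting: any string that is rare as a whole is dropped, however common its individual words may be.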

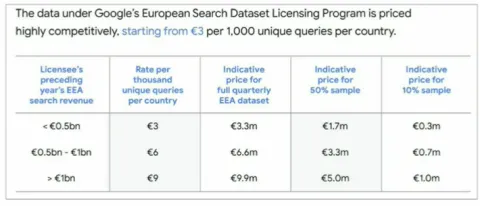

The most astounding development on Google’s side materialised with regard to pricing. The gatekeeper looked at the pricing of broadly similar offerings in the market and reached the conclusion that it would charge 3€ per 1,000 distinct unique queries per country. To put things in perspective, Google processes over 13.7 to 16 billion search queries daily, with 15% of these daily queries being brand new (2 billion and four hundred million of those queries are unique to the search engine). Bearing in mind the comparison, Google would charge per 1,000 distinct unique queries for each quarter and provide a range of options to third-party online search engines, where access to the Dataset could be provided partially (from 10% to 50%) or totally, depending on the competitor’s EEA search revenue in the preceding year. See below the pricing conditions introduced by Google in 2024:

Figure 1. A screenshot of Google's presentation in the 2024 compliance workshop.

The gatekeeper introduced minor tweaks to its approach to complying with Article 6(11) DMA in 2025. It added a new pricing tier (50% cheaper, for third parties with revenues below €0.05 bn), gave potential recipients further optionality on the data scope (a one-time 5% fractional dataset, available before deciding whether to purchase the full dataset or the fractional 10% or 50% options) and made technical changes to increase the volume of the Dataset in a privacy-safe manner (page 187 of Alphabet’s 2025 compliance report).
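The pricing logic of Google’s offer can be expressed in a few lines of Python. This is a sketch under stated assumptions: I assume the €3 rate applies to the sum of distinct queries counted per country for one quarter, that the chosen fraction (5%, 10%, 50% or 100%) simply scales that volume, and that the 2025 discount halves the price for recipients with EEA search revenue below €0.05 bn. How Google mapped revenue to the available fractions is set out in its own pricing table and is not modelled here; `quarterly_price` is a hypothetical helper.

```python
PRICE_PER_1000_EUR = 3.0         # 2024 offer: €3 per 1,000 distinct queries per country
SMALL_FIRM_REVENUE_EUR = 0.05e9  # 2025 tier threshold: revenue below €0.05 bn
SMALL_FIRM_DISCOUNT = 0.5        # 2025 tier: 50% cheaper

def quarterly_price(distinct_queries_per_country, fraction, eea_search_revenue_eur):
    """Hypothetical quarterly price in euros for a (fractional) Dataset.

    distinct_queries_per_country: mapping country code -> distinct queries that quarter.
    fraction: share of the Dataset purchased, e.g. 0.05, 0.10, 0.50 or 1.0.
    """
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    billable_queries = fraction * sum(distinct_queries_per_country.values())
    price = billable_queries / 1000 * PRICE_PER_1000_EUR
    if eea_search_revenue_eur < SMALL_FIRM_REVENUE_EUR:
        price *= 1 - SMALL_FIRM_DISCOUNT  # assumed: discount applies to the whole bill
    return price
```

Under these assumptions, half of a Dataset covering 1,000,000 distinct queries in each of two countries would cost €3,000 per quarter, or €1,500 for a recipient below the revenue threshold.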

At Alphabet’s second compliance workshop, held in July 2025,10 the third parties that stood to benefit from the provision argued that the anonymisation techniques applied by Google rendered nearly all of the accessible data useless. DuckDuckGo argued that more than 99% of distinct queries (which are precisely where online search engines can make money) were removed, whereas the Czech search engine Seznam estimated that only 2% of the unique data that could be extracted from the Dataset would be of productive use. Google responded to those concerns by arguing that it had looked for additional ways to recover queries that did not meet the thresholding requirements (e.g., by extracting easily identifiable misspelled words) and that it was unaware of other methods that would ensure the level of anonymisation required by the DMA whilst preserving data utility. In the same vein, it maintained that frequency thresholding is a state-of-the-art method and that its thresholds sit well below (and are, thus, more favourable to third parties than) the high thresholds that other companies apply. Additionally, Google’s representatives stated that the Dataset was not meant as a “utility test” and encouraged third parties to propose alternative anonymisation techniques that would render the data useful whilst preserving confidentiality in the way required by Article 6(11) DMA. Despite the harsh comments, the gatekeeper made no further amendments to its compliance approach with Article 6(11) DMA in its 2026 compliance report (page 200).

Against this backdrop, the EC opened specification proceedings to assist Google in complying with the search data sharing obligations under the DMA, and here’s where we stand as of today. The enforcer implicitly considers that the gatekeeper’s implementation of the provision falls short of the results it should deliver, i.e., a more open and contestable search engine market.

The implementation measures proposed by the European Commission

Article 6(11) DMA is quite a strange provision within the regulation because its effectiveness depends on many distinct tenets involving not only competition-related aspects, but also data protection requirements. On the former, the provision requires the designated gatekeeper to provide access to the data on FRAND terms, even though one cannot easily guess what FRAND will mean in the DMA’s context.11 It is not particularly clear what the legislator sought to achieve by introducing the FRAND requirement, aside from ensuring that Google negotiates in good faith with third-party online search engines when providing access to its search data.

On the latter, Article 6(11) DMA reads that “any (…) query, click and view data that constitutes personal data shall be anonymised”. Along the same lines, Recital 61 establishes that “a gatekeeper should ensure the protection of the personal data of end users, including against possible re-identification risks, by appropriate means, such as anonymisation of such personal data, without substantially degrading the quality or usefulness of the data. The relevant data is anonymised if personal data is irreversibly altered in such a way that information does not relate to an identified or identifiable natural person or where personal data is rendered anonymous in such a manner that the data subject is not or is no longer identifiable”. In other words, the EU legislator wishes to compel Google to solve the privacy-utility trade-off when it comes to search engine data. There is a fundamental tension in data science between protecting individual privacy (by minimising sensitive data disclosure) and maintaining high data utility (by ensuring that data remains accurate and useful for analysis). First and foremost, Google must strike the right balance and find an acceptable level of anonymisation that still provides data utility to third parties. One must stress that this is a broader challenge that data scientists have been struggling with for the last 20 years. Recent research confirms a no-free-lunch scenario in which it is impossible to simultaneously achieve negligible privacy leakage and maximum utility.12 Nonetheless, the DMA places the gatekeeper in a tug-of-war: data protection standards must be upheld to the highest possible degree, whilst it must choose the anonymisation technique that best preserves the quality and usefulness of the data, as set out in para 180 of the draft Joint Guidelines on the interplay between the DMA and the GDPR.13

Bearing in mind both main tenets, the Implementation Measures can be broken down into five main aspects: i) the eligibility of the parties that can be considered as a third-party undertaking providing an online search engine; ii) the scope of data provided and the conditions for its sharing; iii) the anonymisation techniques to be used; iv) FRAND pricing; and v) the process for the acquisition of the Search Dataset. The FRAND mandate permeates all five elements, but the EC’s focus is clearly directed at setting adequate pricing benchmarks that account for the value of Google’s efforts in complying with Article 6(11) DMA.

Eligibility: To AI or not to AI

Without a doubt, the search engine experience has undergone a profound transformation in the last couple of years due to the incursion of chatbots. In Q1 2026, roughly 4.3% of the search engine market was powered by AI, and by the end of 2027, projections put that share at more than 10%.14 The growth can be attributed to disruptors (themselves prone to dominance) such as OpenAI and Perplexity, which account for 2.1% and 1.4% of total search queries performed globally, as well as to Google’s own AI-powered tool, AI Overviews, which appears on over 50% of search queries.15

AI search platforms were built with a direct-answer architecture from the start. The user asks the chatbot a question, and the chatbot returns an answer that may or may not include direct links to the content on which the answer is based. For instance, ChatGPT is built as a conversational assistant that uses search as a secondary feature, whereas Perplexity is built as a search engine with citations. Growing user interaction with chatbots has pushed zero-click rates to a peak over the last couple of years. A zero-click search occurs when a user’s query is answered directly on the search engine, eliminating the need to click any links. Five years ago, approximately 25% of Google searches ended without a click. With the introduction of featured snippets and knowledge panels, zero-click rates started to increase. AI Overviews has taken zero-click searches to a whole other level, with rates peaking at 65% overall. Relatedly, for every 1,000 Google searches, only 360 clicks go to the open web, i.e., the websites where publishers and advertisers do their business (the money side of the market).

Zero-click behaviour is even more pronounced in the chatbot context, with rates ranging from 82% for ChatGPT Search to 93% for Perplexity. That is to say, even when users are offered links to the open web (e.g., on Perplexity), they tend to ignore them and rely on a single answer. Despite the low click-through engagement they generate, conversational chatbots compete directly with search engines as an alternative source of information, especially for consumers who interact with them more frequently.16 Given the trend toward zero-click searches, click data will become scarcer (and, thus, more valuable to competitors seeking to drive engagement), and the bulk of the data shared by the gatekeeper will consist of ranking, query and view data.

The EC’s Implementation Measures seek to interpret broadly the concept of third-party undertakings providing online search engines amid the AI revolution. In a brief explanation of its underlying rationale, the EC decides to incorporate undertakings providing AI chatbots with online search engine functionalities into the scope of Article 6(11) DMA (para 2 of the Implementation Measures), to the extent that they meet the definition set out in Article 2(6) DMA.

The definition refers back to the concept embedded in Article 2(5) of the P2B Regulation17 (which might be problematic in itself, given that the incoming Digital Omnibus proposes to repeal the P2B Regulation18): “a digital service that allows users to input queries in order to perform searches of, in principle, all websites, or all websites in a particular language, on the basis of a query on any subject in the form of a keyword, voice request, phrase or other input, and returns results in any format in which information related to the requested content can be found” (emphasis added). The EC considers that AI chatbots offering search engine functionality automatically qualify as search engines in terms of the P2B Regulation. In my view, those services do not automatically fall within the definition, since many AI chatbots do not allow users to input queries for the particular purpose of performing searches of all websites. Even where some AI chatbots display similar search engine functionality, the way in which they present such information to the user is markedly different. Even though both services may cater to the same need in an interchangeable manner, the EC’s Implementation Measures could go a bit further in explaining why AI chatbots should be included within the scope of the provision.

Thus, one must consider how AI chatbots might benefit from the application of Article 6(11) DMA and how far those results would align with the objective set out by the EU legislator. In line with Recital 61, the value of online search engines to their respective business users and end users increases with the total number of such users, owing to data-driven network effects and the data advantages the incumbent derives from them. As set out by Schaefer and Sapi,19 more comprehensive data about users improves the efficiency of the search engine by reducing the number of user interactions required to achieve a given quality for a prediction task. Because data is not simply an input but rather a technology shift, data has the potential to confer a significant competitive advantage and to act as a barrier to entry. Article 6(11) DMA addresses (and seeks to eliminate) this barrier to entry for search engine competitors that could potentially contest the designated gatekeeper’s position in the market. In economic terms, sharing search data with AI chatbots might super-power them and further widen the gap with traditional search engine competitors, to the extent that data network effects do not manifest in the same fashion in the AI context as they do on traditional platforms.20

Expanding the provision’s scope to AI chatbots with online search engine functionality may, in the end, promote the replacement of the designated gatekeeper with a distinct technology that we know less about, especially with regard to its economic functioning. I therefore encourage the EC to flesh out its reasoning for expanding the scope of the provision to AI chatbots, since the expansion may create more uncertainty than the predictability that specification proceedings seek to deliver.

Data scope and conditions of sharing: the principle of parity

The EC’s Implementation Measures interpret the non-discrimination tenet of FRAND access to search data by introducing a new legal standard into Article 6(11) DMA. As set out by the EC in the case summary,21 the principle of parity guides the scope of the search data that Google must provide. In application of that principle, Google must: i) share with third parties all query, view, click and ranking data that it collects for the purpose of optimising its search services; ii) share search session data on par with the data that it itself collects and uses; and iii) share Search Data with third parties on par with Alphabet’s own frequency of access to the same data (paras 3, 12 and 15 of the Implementation Measures). Rather than relying on the principle of equivalence of input that the EC fleshed out in its specification proceedings concerning Apple’s compliance with Article 6(7) DMA,22 the EC’s Implementation Measures insert the principle of parity into the law and narrow down the conditions of access that the gatekeeper can negotiate with third parties.

In practice, this means that the scope of data to be disclosed is much broader than what the gatekeeper offered in its compliance report, since Google will have to share comprehensive query, click, view and ranking data. For instance, the Implementation Measures compel the gatekeeper to share any query input entered by a user into Google Search on any access point, any modifications made by end users and Alphabet to this initial query and any other metadata about the user and query (para 4), as well as any data on user interaction with search engine results pages as displayed on the user’s device, including the timing, order and duration of a click or its absence, in the form of hovering, scrolling, swipes and expansion of a URL/block (para 9).

In terms of the conditions of data sharing, the gatekeeper’s competitors are also placed on par with Google, insofar as the Search Data will be shared with them using the same method that Google uses internally (para 16). Sharing will take place via an API that enables third parties to access only new data, rather than the complete updated dataset every time they call the API (para 17). Moreover, following negotiation, Google will offer the Search Data to its competitors on an ongoing basis for a minimum of five years, but not with respect to the legacy data it has generated in recent years (para 18). Competition and contestability will, therefore, grow at an incremental pace rather than conferring an immediate competitive advantage on competitors, given that they will not be able to access the bulk of the data on which Google has built its position in the search engine market.
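The “new data only” API of para 17 can be pictured as a cursor-based feed. The sketch below assumes an append-only log in which each record’s position acts as its sequence number; `SearchDataFeed` and `fetch_since` are hypothetical names, not anything specified in the Implementation Measures. The recipient keeps the cursor returned by its last call and only ever receives records published after it, which also illustrates why nothing generated before the feed goes live (the legacy data excluded by para 18) reaches the recipient.

```python
from dataclasses import dataclass, field

@dataclass
class SearchDataFeed:
    """Hypothetical append-only feed: a record's list position is its sequence number."""
    records: list = field(default_factory=list)

    def publish(self, record):
        """Gatekeeper side: append a newly anonymised record."""
        self.records.append(record)

    def fetch_since(self, cursor, limit=1000):
        """Recipient side: return up to `limit` records after `cursor`, plus the next cursor."""
        if cursor < 0:
            raise ValueError("cursor must be non-negative")
        batch = self.records[cursor:cursor + limit]
        return batch, cursor + len(batch)
```

Because the recipient only sends its cursor, each call transfers the delta rather than the whole dataset; a call with an up-to-date cursor simply returns an empty batch.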

Anonymisation techniques: from frequency thresholding to entity and length-based thresholding

The EC’s approach towards the anonymisation techniques that Google must deploy to ensure the masking of personal data involves technical and contractual measures. The draft Joint Guidelines on the interplay between the GDPR and the DMA already recognised that anonymisation should be achieved by appropriate technical measures for alteration of the data, complemented by organisational, administrative and contractual measures to mitigate residual likelihood of identification (para 181 of the draft Joint Guidelines).

On the side of the technical measures, Google is compelled to share the Search Data daily and at record level. Each input record shall contain a query text with metadata and shall meet the technical measures to be included in the Search Data (para 20 of the Implementation Measures). On that basis, the EC’s Implementation Measures propose a five-step pipeline. First, the gatekeeper must remove direct identifiers and other types of identifying data, such as precise timestamps of the search, from the search data (attribute suppression) (para 21). Second, the gatekeeper must break each search into individual pieces (entities) and maintain a weekly-updated allowlist of query entities used by more than 50 unique signed-in users over 13 months (allowlist creation through entity-based thresholding). If every entity in a search is common in this sense, the record stays in the dataset; if any entity falls below the threshold, the entire record is discarded (para 22). Third, the gatekeeper must apply a second type of thresholding based on the length of the search, because long searches are statistically more likely to be unique and identifying. Every week, the system will calculate a maximum character limit for each language, and any search longer than what 95% of people typically type is automatically blocked (para 23). Fourth, if a search passes the first three steps, the system blurs the background information about the user, such as their location and device, by checking whether at least 50 people share the same location and device type. If they do not, the system zooms out to a broader location until the user is part of a group of at least 50 identical-looking users, e.g., scaling from Downtown Madrid to Madrid City, then to Spain and finally to Europe, if necessary (metadata generalisation, paras 28-31). Finally, to help third parties providing online search engines understand how users behave without actually tracking them, related searches are grouped into mini-sessions, e.g., when a user clicks a ‘did you mean…’ suggestion presented by Google (mini-sessionisation, paras 32-34).
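The first three steps of the pipeline can be sketched in Python as follows. This is an illustration under simplifying assumptions, not the EC’s specification: entities are approximated by whitespace tokens (a production system would use an entity recogniser, so that ‘john smith’ counts as one entity), the field names are hypothetical, and the 95th percentile uses the nearest-rank method. Note that the thresholds have to be computed on the gatekeeper’s internal, identified log, which is why attribute suppression is applied to each record on output.

```python
import math
from collections import defaultdict

DIRECT_IDENTIFIERS = {"user_id", "ip_address", "timestamp"}  # hypothetical field names
ENTITY_MIN_USERS = 50     # entity must be used by MORE than 50 distinct signed-in users
LENGTH_PERCENTILE = 0.95  # per-language cap: queries longer than this percentile are blocked

def entities(query):
    """Assumption: whitespace tokens stand in for real entity extraction."""
    return query.lower().split()

def suppress_attributes(record):
    """Step 1: drop direct identifiers and precise timestamps."""
    return {k: v for k, v in record.items() if k not in DIRECT_IDENTIFIERS}

def build_allowlist(log):
    """Step 2: entities seen from more than ENTITY_MIN_USERS distinct users."""
    users = defaultdict(set)
    for rec in log:
        for entity in set(entities(rec["query"])):
            users[entity].add(rec["user_id"])
    return {e for e, u in users.items() if len(u) > ENTITY_MIN_USERS}

def length_caps(log):
    """Step 3: nearest-rank 95th percentile of query length (characters) per language."""
    lengths = defaultdict(list)
    for rec in log:
        lengths[rec["language"]].append(len(rec["query"]))
    caps = {}
    for language, values in lengths.items():
        values.sort()
        rank = math.ceil(LENGTH_PERCENTILE * len(values))
        caps[language] = values[rank - 1]
    return caps

def filter_records(log):
    """Keep a record only if every entity is allowlisted and it is within the length cap."""
    allowlist, caps = build_allowlist(log), length_caps(log)
    kept = []
    for rec in log:
        if not all(e in allowlist for e in entities(rec["query"])):
            continue  # a single rare entity discards the whole record
        if len(rec["query"]) > caps[rec["language"]]:
            continue  # unusually long queries are more likely to be identifying
        kept.append(suppress_attributes(rec))
    return kept
```

In this sketch, a record carrying one rare token fails step 2 even though all its other words are common, while step 3 independently removes the long tail of query lengths in each language.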

As you may have guessed, the EC’s Implementation Measures move away from a traditional single-gate approach towards a complex filtering system that prioritises entity frequency and length-based thresholding. Under the frequency-based threshold that Google had proposed, a query string that did not meet the global frequency threshold as a whole, i.e., more than 30 signed-in users globally over 13 months, was discarded from the Dataset. The EC’s Implementation Measures instead set out a more refined filtering pipeline in which a query must survive multiple checks before being included in the Search Dataset. For example, Google’s proposed implementation of Article 6(11) DMA required the exact string, e.g., ‘how to fix a bike’ as a whole, to have been entered by more than 30 users. The EC’s approach focuses on the components of the string and measures whether they are individually popular, i.e., ‘how’, ‘to’, ‘fix’, ‘a’ and ‘bike’ are each measured against the >50 threshold. In addition, requiring that at least 50 signed-in users share the same metadata meaningfully reduces the precision of re-identification attacks based on rare metadata combinations. As a result, a query is safer to share even if that specific combination is rare.
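Step four, metadata generalisation, can be sketched as a zoom-out loop over a location hierarchy. The hierarchy levels, field names and the function `generalise_metadata` are my own assumptions for illustration. One detail the sketch makes explicit: at each coarser level, only the records that have not yet found a large enough group are counted, so every released (level, location, device) label is backed by at least 50 records; records that are still too rare at the top level are suppressed.

```python
from collections import Counter

K_MIN = 50  # minimum number of users sharing the same location and device type
LEVELS = ("district", "city", "country", "continent")  # hypothetical hierarchy, finest first

def generalise_metadata(records, k=K_MIN):
    """Return one (level, location, device) label per record, or None if suppressed."""
    labels = [None] * len(records)
    pending = list(range(len(records)))
    for level in LEVELS:
        # Count only records that have not yet been placed in a large enough group.
        groups = Counter((records[i][level], records[i]["device"]) for i in pending)
        still_pending = []
        for i in pending:
            key = (records[i][level], records[i]["device"])
            if groups[key] >= k:
                labels[i] = (level, *key)
            else:
                still_pending.append(i)  # zoom out to the next, broader level
        pending = still_pending
    return labels  # records still pending after the top level stay None (suppressed)
```

For example, 60 mobile users in one Madrid district keep their district label, whereas two smaller Madrid districts (30 and 25 users) are merged at city level, and a lone desktop user in Barcelona never reaches 50 and is dropped.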

There may also be some inherent technical flaws in the EC’s approach. For instance, the Dataset might be vulnerable to homogeneity attacks: if all 50 users sharing the same metadata also share a sensitive query topic (e.g., a rare medical condition), the group may be anonymous, but it is not private at all.23 Along the same lines, if the beneficiaries receive the Dataset and have access to other data sources, the anonymisation techniques may prove ineffective in practice, since an economic player holding substantial auxiliary data could plausibly exploit it to mount composition or linkage attacks.24
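The homogeneity problem is easy to demonstrate. In the sketch below, `min_group_size` measures k-anonymity and `min_distinct_sensitive` measures distinct l-diversity, a standard metric from the anonymisation literature that, as far as the measures summarised above go, the Implementation Measures do not impose. The data is invented: a group of 50 users who share location and device satisfies the 50-user rule, yet every one of them searched the same sensitive topic, so membership in the group reveals it.

```python
from collections import Counter, defaultdict

def min_group_size(records, quasi_identifiers):
    """k-anonymity: size of the smallest group sharing the same quasi-identifier values."""
    sizes = Counter(tuple(r[q] for q in quasi_identifiers) for r in records)
    return min(sizes.values())

def min_distinct_sensitive(records, quasi_identifiers, sensitive):
    """Distinct l-diversity: fewest distinct sensitive values found in any group."""
    values = defaultdict(set)
    for r in records:
        values[tuple(r[q] for q in quasi_identifiers)].add(r[sensitive])
    return min(len(v) for v in values.values())

# Invented example: 50 identical-looking users who all searched the same topic.
group = [{"city": "Madrid", "device": "mobile", "topic": "rare condition X"}] * 50
print(min_group_size(group, ("city", "device")))                   # k = 50
print(min_distinct_sensitive(group, ("city", "device"), "topic"))  # l = 1
```

A group can pass a k = 50 check while having l = 1, which is exactly the “anonymous but not private” situation. The auxiliary-data problem is harder still, since no metric computed on the released Dataset alone can account for what the recipient already knows.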

Let us turn, however, to the end result. Will the EC’s Implementation Measures ensure that third-party undertakings providing online search engines can access substantial and useful data to contest the gatekeeper’s position in the market? The answer is nuanced, because it depends on the query. For example, consider the query ‘best cardiologist Amsterdam 2024’. Under the pure frequency thresholding proposed by Google in its compliance reports, this exact string might have been issued by fewer than 30 users, causing the whole record to be suppressed. Under the EC’s approach, the entities ‘best’, ‘cardiologist’, ‘Amsterdam’ and ‘2024’ are each checked independently against the allowlist. Each of these words individually appears in thousands of queries, and the query is short. Both thresholds are passed, and the record is included, even though the exact query string is rare. In such cases, the EC’s approach is more inclusive, because it disaggregates the query and checks its components rather than the whole string, allowing many queries that are lexically unique but semantically common to pass through.

Now take a different query, such as ‘flu symptoms’. Under Google’s proposed implementation, this exact string is issued by millions of users and would clear any threshold. The same applies under the EC’s approach. But now consider ‘john smith flu symptoms’. Under frequency thresholding, the query might still meet the threshold if many users happened to search for this combination. Under the EC’s Implementation Measures, by contrast, ‘john smith’ is detected as a name entity that fails the allowlist threshold (because fewer than 50 users issued queries containing ‘john smith’), and the entire record is suppressed. The effect of the EC’s Implementation Measures on the Dataset’s size is therefore ambiguous without empirical data, because two opposing forces are at play. On the one hand, the entity-based approach expands coverage by letting through lexically rare but semantically common queries that Google’s initial implementation would have suppressed for falling below the frequency threshold. On the other hand, it contracts coverage by suppressing any record containing a rare entity, such as a personal name, even where the full string would have cleared Google’s threshold. All in all, the EC’s approach is better targeted, because it is more permissive towards common concepts in rare combinations and more restrictive towards personal identifiers embedded in any query.
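The two opposing forces can be reproduced with toy numbers. In the sketch below, the user counts and the entity segmentation are invented to mirror the three examples in the text (they are not empirical data), the length check is omitted because all three queries are short, and `passes_google_2024` and `passes_ec_entities` are hypothetical helpers.

```python
# Invented 13-month distinct-user counts, chosen only to mirror the examples in the text.
STRING_USERS = {
    "best cardiologist amsterdam 2024": 12,
    "flu symptoms": 2_000_000,
    "john smith flu symptoms": 40,
}
ENTITY_USERS = {
    "best": 9_000_000, "cardiologist": 80_000, "amsterdam": 700_000, "2024": 5_000_000,
    "flu": 1_500_000, "symptoms": 6_000_000, "john smith": 30,
}
# Assumed entity segmentation (a personal name is treated as a single entity).
ENTITIES = {
    "best cardiologist amsterdam 2024": ["best", "cardiologist", "amsterdam", "2024"],
    "flu symptoms": ["flu", "symptoms"],
    "john smith flu symptoms": ["john smith", "flu", "symptoms"],
}

def passes_google_2024(query):
    """Whole-string rule: more than 30 distinct signed-in users."""
    return STRING_USERS[query] > 30

def passes_ec_entities(query):
    """Entity rule: every entity used by more than 50 distinct signed-in users."""
    return all(ENTITY_USERS[e] > 50 for e in ENTITIES[query])

for q in STRING_USERS:
    print(f"{q!r}: Google 2024 -> {passes_google_2024(q)}, EC -> {passes_ec_entities(q)}")
```

The first query flips from excluded to included, the second passes both rules, and the third flips from included to excluded, which is why the net effect on the Dataset’s size cannot be settled without real query logs.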

As for the contractual measures, they set legal boundaries and allocate responsibilities between the gatekeeper and third-party online search engines by recognising each as an independent data controller within the meaning of the GDPR. Google is responsible for technical anonymisation before sharing the search data, and third parties are liable for all subsequent processing (para 35). Third parties are strictly prohibited from attempting to re-identify users, linking the search data with auxiliary datasets (which lowers the risk of composition attacks) or augmenting the data to reverse any applied anonymisation (para 38). Furthermore, the data can only be used for the specific purpose of optimising their search services by improving ranking systems or auto-completion functionality (para 40).

To ensure the integrity of the Dataset, third parties must implement rigorous security and accountability protocols, such as state-of-the-art encryption, robust access controls and detailed logs of all data access kept for at least one year. On top of that, the Implementation Measures mandate a continuous independent verification mechanism (paras 41-43). These additional mechanisms create legal and procedural safeguards that make intentional re-identification extremely high-risk for a third-party search engine.

FRAND pricing: rolling with the punches

Article 6(11) DMA constitutes a tall order on the part of the EU legislator. The obligation applies to only one designated gatekeeper, which occupies nearly the entire market, and the EC’s Implementation Measures assume that Google must roll with the punches if it has reached such a prominent position. This is particularly clear in how the EC presents pricing in the context of Article 6(11) DMA. The compensation that third parties may owe Google for access to its search data will only “reflect the incremental costs incurred by Google for the purpose of making such Search Data available plus a reasonable return, corresponding to a return on the capital employed for that purpose that shall not exceed Alphabet’s weighted average cost of capital” (para 71). Those incremental costs include fixed and variable costs relating to the preparation and formatting of the Dataset, the storage costs of making it available, and the costs incurred in electronically transmitting the data to eligible third parties and in verifying and identifying those same third parties (para 76). Google must also provide the basis for calculating the compensation in sufficient detail for eligible third parties to decide whether they wish to negotiate with it (para 83). Some third parties that may request access, such as Ecosia, Brave Search, Startpage, You.com or Mojeek, might be particularly advantaged by the regime, since micro, small and medium-sized enterprises are exempted from paying more than the incremental costs incurred by the gatekeeper and a reasonable return on the capital employed for that purpose that shall not exceed Google’s weighted average cost of capital (para 74).
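The para 71 ceiling reduces to a simple formula: incremental costs plus a return on capital employed at a rate no higher than Alphabet’s weighted average cost of capital (WACC). The sketch below groups the cost items listed in para 76; the class and function names are hypothetical, and all figures in the usage example, including the 9% WACC, are invented for illustration.

```python
from dataclasses import dataclass, fields

@dataclass
class IncrementalCosts:
    """Cost items listed in para 76 (amounts in euros, illustrative)."""
    preparation_fixed: float
    preparation_variable: float
    storage: float
    transmission: float
    verification: float

    def total(self):
        return sum(getattr(self, f.name) for f in fields(self))

def compensation_ceiling(costs, capital_employed, wacc):
    """Maximum compensation: incremental costs + capital employed x WACC."""
    return costs.total() + capital_employed * wacc

def within_ceiling(proposed_fee, costs, capital_employed, wacc):
    """Would a proposed fee respect the cost-plus-capped-return ceiling?"""
    return proposed_fee <= compensation_ceiling(costs, capital_employed, wacc)

# Invented figures: €185,000 of incremental costs, €1m capital employed, 9% WACC.
costs = IncrementalCosts(100_000, 50_000, 20_000, 10_000, 5_000)
print(compensation_ceiling(costs, 1_000_000, 0.09))  # roughly 275,000
```

What the formula leaves out is just as telling: there is no term for the value of the data or for the upfront costs of generating it in the first place.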

The EC’s proposal to base FRAND pricing for access to search data on an incremental cost-based methodology may remind the avid reader of the incremental value method used to determine FRAND licensing terms for Standard Essential Patents (SEPs). That approach calculates a royalty based on the value added by a specific patent over the next-best alternative available at the time the standard was adopted (ex ante), rather than the value added after the technology is locked into a standard (ex post). At the outset, the two methodologies are not comparable, nor do they share the same underlying rationale. The goal of FRAND pricing in SEPs is to preserve incentives to invest in the patented technologies, while fostering downstream competition in the implementation of those technologies.

The EC’s Implementation Measures do neither, since they approximate a price based on cost rather than value. That is to say, the designated gatekeeper must accept that Article 6(11) DMA will be effective (improving contestability in the market and, in turn, harming its search business) and that it will only be compensated for the costs incurred in putting the obligation into practice, and nothing more. Although the pricing structure might be fair for business users, it might be unreasonable to ask the designated gatekeeper to forgo any further remuneration, especially bearing in mind that the Court of Justice recognised that the application of “Article 102 TFEU does not, however, preclude (the) undertaking from requiring an appropriate financial contribution from the undertaking which requested interoperability. Such contribution must be fair and proportionate (…), having regard to the actual cost of such development, to derive an appropriate benefit from it”.25

Given that incremental running costs are accounted for, one may argue that the non-amortised upfront fixed costs involved in generating the search data could also legitimately be included, to some extent, in the FRAND pricing formula proposed by the European Commission. Alternatively, Kalmus, Prasad and Salem observe that search engines typically do not require business users to pay a fixed fee for access, but monetise the platform through the revenues they generate from selling ad space. Consequently, there may only be an implicit price for access, one that depends on the price paid for advertising slots and the degree of competition amongst business users.26 If anything, the initial proposal based only on incremental costs is quite daring, considering that FRAND pricing in SEPs is frequently based on more than one pricing benchmark because there is no single, universally accepted standard for determining FRAND terms.

The process for the acquisition of the Search Dataset

The EC’s Implementation Measures design a multi-step process for acquiring the Search Dataset to ensure that third parties providing online search engine functionality protect personal data to a similar extent as the gatekeeper. When Google engages in one-on-one negotiations with any of these third parties, it will perform an eligibility assessment based solely on the privacy assurances they provide. Before gaining full access to the Dataset, the gatekeeper’s competitor must sign a licence agreement with Google and provide a Level 1 reasonable assurance report from an independent auditor confirming that its technical and organisational systems are designed to handle the data safely (paras 108-111). Once access is granted, the process moves into a phase in which auditing and financial requirements are checked annually. Third parties must submit annual Level 2 assurance reports to demonstrate that their data protection controls are operating effectively in practice. The designated gatekeeper can suspend or terminate access if a third party fails to provide these annual reports or if an audit reveals significant gaps in its privacy safeguards (paras 113-116).
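
The lifecycle described in paras 108-116 can be read as a small state machine. The Python sketch below is a deliberately simplified, hypothetical model: each requirement is reduced to a yes/no input, the financial checks and the FRAND negotiation are left out, and the names Status, onboarding_status and annual_review are illustrative rather than drawn from the Implementation Measures. Because the gatekeeper has discretion to suspend or terminate access, the sketch models the adverse outcome as a state in which that option opens, not as automatic termination.

```python
from enum import Enum


class Status(Enum):
    REJECTED = "rejected at eligibility assessment"
    PENDING = "eligible, awaiting licence agreement and Level 1 report"
    ACTIVE = "full access to the Search Dataset"
    AT_RISK = "gatekeeper may suspend or terminate access"


def onboarding_status(privacy_assurances_ok: bool, licence_signed: bool,
                      level1_report_submitted: bool) -> Status:
    """Entry into the regime (paras 108-111), as simplified in this sketch."""
    if not privacy_assurances_ok:
        return Status.REJECTED  # eligibility rests solely on privacy assurances
    if licence_signed and level1_report_submitted:
        return Status.ACTIVE
    return Status.PENDING


def annual_review(current: Status, level2_report_submitted: bool,
                  significant_gaps_found: bool) -> Status:
    """Yearly check (paras 113-116): a missing Level 2 report or significant
    gaps in privacy safeguards open the door to suspension or termination."""
    if current is not Status.ACTIVE:
        return current  # only active licensees are reviewed in this sketch
    if not level2_report_submitted or significant_gaps_found:
        return Status.AT_RISK
    return Status.ACTIVE
```

For example, a licensee with access that skips its annual Level 2 report moves from ACTIVE to AT_RISK, whereas one that submits a clean report stays ACTIVE.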

The requirement for third parties providing online search engines to obtain internationally recognised audit reports can pose a significant financial hurdle for startups seeking to contest the gatekeeper’s position. For a small search engine, the cost of hiring an auditing firm to produce Level 1 and Level 2 reports may outweigh the benefits of the data. This could leave the Dataset available only to well-funded rivals.

To ensure that the Dataset is fit for purpose before third parties enter into a legal commitment with the gatekeeper, Google must provide three test data samples with increasing levels of detail (Samples A, B and C). Sample A is a small sample from the Search Dataset provided free of charge (paras 87 and 88), whereas Sample B is much larger in scope and can, to some extent, be tailored by the third party to test whether accessing the search data may serve the purpose pursued by Article 6(11) DMA (paras 89-94). Sample C comprises 5% of the final Search Dataset, selected from queries occurring over a period of no less than one month and no more than one year (para 95). Given that the gatekeeper will have to anonymise queries for Samples B and C, the EC’s Implementation Measures provide for the remuneration of these tasks, in a similar way to the FRAND pricing set out above.
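
The constraints on Sample C in para 95 can be expressed as a selection rule. The Python sketch below is one hypothetical reading, not the Commission’s specification: it assumes uniform random sampling of 5% of the records falling inside the chosen window, approximates one month as 30 days and one year as 365 days, and represents records as (date, query) pairs. The function name draw_sample_c is illustrative.

```python
import random
from datetime import date


def draw_sample_c(records: list[tuple[date, str]], window_start: date,
                  window_end: date, share: float = 0.05,
                  seed: int = 0) -> list[tuple[date, str]]:
    """Draw a hypothetical Sample C: `share` of the records dated within
    [window_start, window_end), where the window spans one month to one year."""
    window_days = (window_end - window_start).days
    # Assumption: "one month" ~ 30 days and "one year" ~ 365 days.
    if not 30 <= window_days <= 365:
        raise ValueError("the window must span between one month and one year")
    in_window = [r for r in records if window_start <= r[0] < window_end]
    k = round(share * len(in_window))  # number of records to draw
    rng = random.Random(seed)          # seeded so the same sample can be reproduced
    return rng.sample(in_window, k)
```

With one record per day and a 100-day window, the sketch returns five records, all dated inside the window; a 10-day window is rejected as too short.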

Once access has been granted, the principle of parity, outlined in passing in the Case Summary and in the Implementation Measures, may be at odds with the actual outcomes produced by the EC’s intervention. If Alphabet’s internal systems access and process data in real time or several times per hour for its own ranking and auto-completion services, providing data only daily would violate the spirit of the principle of parity, even though the Implementation Measures provide for the daily sharing of data (para 20). In the same vein, the daily anonymisation pipeline entails that Google will apply the suppression procedures every day to records generated in the past 24 hours (para 24). This may create a structural delay whereby data is, by definition, up to 24 hours old before it even enters the Dataset. For high-velocity search categories such as breaking news and viral trends, third parties would receive the data after peak interest has already passed. This effect might be amplified by the techniques used to ensure the Dataset’s utility, notably the allowlist updates and length-based thresholding, given that both will be updated and recomputed every week. Consequently, even setting aside the daily delivery lag, the anonymisation techniques used to decide which queries are shared are refreshed only once a week, so that new and trending terms may be blocked if they were not allowlisted in the previous week.
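
The compounding of the daily and weekly cycles can be illustrated with a toy timing model. The Python sketch below rests on assumptions that are ours, not the Commission’s: the daily batch runs at hours 0, 24, 48 and so on; the weekly allowlist is recomputed at hours 0, 168, 336 and so on; a brand-new query can only pass the filter once a recompute has taken place after its first appearance; and a batch running at the same hour as a recompute already uses the new allowlist. Length-based thresholding is left out, and the function names are hypothetical.

```python
import math
from typing import Optional


def next_run(t: float, period: float) -> float:
    """First scheduled run strictly after hour t, for runs at 0, period, 2*period, ..."""
    return (math.floor(t / period) + 1) * period


def first_delivery_hour(first_seen_h: float, batch_every_h: float = 24.0,
                        allowlist_every_h: Optional[float] = 168.0) -> float:
    """Earliest hour at which records of a new query can reach third parties."""
    if allowlist_every_h is None:
        # Baseline without an allowlist: the query is eligible as soon as it appears.
        eligible_h = first_seen_h
    else:
        # The query must wait for the next weekly recompute to be allowlisted.
        eligible_h = next_run(first_seen_h, allowlist_every_h)
    # The delivering batch must follow the query's appearance and run no earlier
    # than the moment the query became eligible.
    after_appearance = next_run(first_seen_h, batch_every_h)
    after_eligibility = math.ceil(eligible_h / batch_every_h) * batch_every_h
    return max(after_appearance, after_eligibility)


if __name__ == "__main__":
    for seen in (1, 170):
        print(seen, first_delivery_hour(seen, allowlist_every_h=None),
              first_delivery_hour(seen))
```

Under these assumptions, a query that first appears one hour after a recompute reaches third parties at hour 168 rather than hour 24, and one that appears at hour 170 waits until hour 336 rather than hour 192. In both cases the weekly refresh, not the daily batch, dominates the delay.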

Key takeaways

Article 6(11) DMA places a general obligation on Google, as the only designated gatekeeper for online search engine services, to share ranking, query, click and view data with third-party undertakings providing online search engines on FRAND terms, whilst ensuring that any personal data is duly anonymised. Google’s initial compliance efforts fell short of what the EC considered effective enforcement of the provision. Its original implementation relied on frequency thresholding, which third parties argued rendered nearly all commercially valuable data unusable.

The EC’s Implementation Measures transform the mandate’s effectiveness in the following ways:

They interpret the scope of Article 6(11) DMA as extending access to the Search Dataset to AI chatbots that perform online search engine functionality under the P2B Regulation’s definition. However, many AI chatbots may not meet that definition, and sharing data with them risks strengthening incumbents in downstream AI markets rather than increasing contestability in the traditional search engine market.

Governed by a new principle of parity, the Implementation Measures compel Google to share data as comprehensively as it collects it for its own services, covering any query input on any access point, any modifications to that query, and granular interaction data, including the timing, order and duration of clicks, hovers, scrolls and swipes on search engine results pages.

They replace frequency thresholding with a more sophisticated five-step pipeline combining entity and length-based thresholding, metadata generalisation and mini-sessionisation. This new approach is better targeted, but its effect on dataset size remains empirically ambiguous.

They opt for an incremental cost-based methodology that limits Google’s compensation to the direct costs of preparing, storing and transmitting the Dataset and of verifying eligible third parties, plus a reasonable return capped at Alphabet’s weighted average cost of capital. The EC’s approach is more ambitious than FRAND pricing precedents in the context of SEPs, and it leaves unaddressed the broader question of whether the non-amortised upfront costs of data generation should feature in the formula.

Before gaining full access to the Dataset, third-party online search engines must sign a licence agreement with Google and submit a Level 1 report from an independent auditor confirming that their technical and organisational systems are designed to handle the data safely, and must then submit annual Level 2 reports as a condition of maintaining access. The verification mechanism provides meaningful safeguards, but the cost of commissioning audit reports risks making the Dataset accessible only to larger players who already have a head start in the market.

Whether the Implementation Measures will deliver a more contestable search engine market depends on the EC’s capacity to integrate stakeholder feedback into their final version.

- 1 Regulation (EU) 2022/1925 of the European Parliament and of the Council of 14 September 2022 on contestable and fair markets in the digital sector and amending Directives (EU) 2019/1937 and (EU) 2020/1828 (Digital Markets Act) [2022] OJ L 265/1.

- 2 DOJ, ‘Department of Justice Wins Significant Remedies Against Google’ (Office of Public Affairs U.S. Department of Justice, 2 September 2025), available at https://www.justice.gov/opa/pr/department-justice-wins-significant-remedies-against-google.

- 3 European Commission, ‘Commission opens proceedings to assist Google in complying with interoperability and online search data sharing obligations under the Digital Markets Act’ IP/26/202.

- 4 Case DMA.100209 – SP – Alphabet – Article 6(11), Proposed Implementation Measures.

- 5 Alphabet, ‘EU Digital Markets Act (EU DMA) Compliance Report Non-Confidential Summary’ (Google, 7 March 2024), on file with author.

- 6 Alphabet, ‘EU Digital Markets Act (EU DMA) Compliance Report Non-Confidential Summary’ (Google, 7 March 2025), available at https://storage.googleapis.com/transparencyreport/report-downloads/pdf-report-bb_2024-3-7_2025-3-6_en_v1.pdf.

- 7 Alphabet, ‘EU Digital Markets Act (EU DMA) Compliance Report Non-Confidential Summary’ (Google, 6 March 2026), available at https://storage.googleapis.com/transparencyreport/report-downloads/pdf-report-bb_2025-3-7_2026-3-6_en_v1.pdf.

- 8 Google, ‘About the Google European Search Dataset Licensing Program’ (Google Search Central, 1 June 2024), available at https://web.archive.org/web/20240601200002/https:/developers.google.com/search/help/about-search-data-program.

- 9 For a comment on the compliance workshop, see Alba Ribera Martínez, ‘Alphabet’s DMA Compliance Workshop – The Power of No: A Gargantuan Task Ahead and a Dual Role in Balancing Interests’ (Kluwer Competition Law Blog, 22 March 2024), available at https://legalblogs.wolterskluwer.com/competition-blog/alphabets-dma-compliance-workshop-the-power-of-no-a-gargantuan-task-ahead-and-a-dual-role-in-balancing-interests/.

- 10 To watch the recording, see European Commission, ‘2nd DMA enforcement workshop: Alphabet – Update on first year of DMA compliance’ (European Commission, 1 July 2025), available at https://webcast.ec.europa.eu/2nd-dma-enforcement-workshop-alphabet-update-on-first-year-of-dma-compliance-2025-07-01; and for a comment on the workshop, see Alba Ribera Martínez, ‘Alphabet’s Second DMA Compliance Workshop: A Self-Reported Engaged Gatekeeper’ (Kluwer Competition Law Blog, 18 July 2025), available at https://legalblogs.wolterskluwer.com/competition-blog/alphabets-second-dma-compliance-workshop-a-self-reported-engaged-gatekeeper/.

- 11 For some approximations, see Jasper van den Boom, ‘The Search for Meaningful Results: FRAND Access Conditions under Article 6(11) DMA’ (2025) 4 CoRe 302; and in the app store context, Kadambari Prasad and Jorge Padilla, ‘Taking Article 6(12) DMA Seriously: FRAND Access prices for App stores’ (2025), available at https://papers.ssrn.com/sol3/papers.cfm?abstract_id=5261312.

- 12 As set out by Xiaojin Zhang, Yahao Pang, Yan Kang, Wei Chen, Lixin Fan, Hai Jin and Qiang Yang, ‘No free lunch theorem for privacy-preserving LLM inference’ (2025) 341 Artificial Intelligence 104923; and Xiaojin Zhang, Yan Kang, Kai Chen, Lixin Fan and Qiang Yang, ‘Trading Off Privacy, Utility, and Efficiency in Federated Learning’ (2023) 14(6) ACM Transactions on Intelligent Systems and Technology 1.

- 13 European Commission and European Data Protection Board, Joint Guidelines on the Interplay between the Digital Markets Act and the General Data Protection Regulation (2025). For a further comment on the draft guidelines, see Alba Ribera Martínez, ‘Great Expectations Placed on the Draft of the EDPB-EC’s Joint Guidelines on the Interplay Between the DMA and the GDPR’ (Kluwer Competition Law Blog, 13 October 2025), available at https://legalblogs.wolterskluwer.com/competition-blog/great-expectations-placed-on-the-draft-of-the-edpb-ecs-joint-guidelines-on-the-interplay-between-the-dma-and-the-gdpr/.

- 14 As illustrated in Digital Applied Team, ‘Zero-Click Search Statistics 2026: Complete Data Guide’ (Digital Applied, 5 April 2026), available at https://www.digitalapplied.com/blog/zero-click-search-statistics-2026-complete-data#ai-search-engine-zero-click-data.

- 15 Elizabeth Silliman, Kelsey Robinson, Julien Boudet, Desirae Oppong and Nilay Shah, ‘New front door to the internet: Winning in the age of AI search’ (McKinsey & Company, 16 October 2025), available at https://www.mckinsey.com/capabilities/growth-marketing-and-sales/our-insights/new-front-door-to-the-internet-winning-in-the-age-of-ai-search.

- 16 As pointed out by Wondwesen Tafesse and Yoseph Mamo, ‘A comparison of conversational chatbots and the internet for consumer information search’ (2025) 45(2) Behaviour & Information Technology 314.

- 17 Regulation (EU) 2019/1150 of the European Parliament and of the Council of 20 June 2019 on promoting fairness and transparency for business users of online intermediation services [2019] OJ L 186/57.

- 18 Proposal for a Regulation of the European Parliament and of the Council amending Regulations (EU) 2016/679, (EU) 2018/1724, (EU) 2018/1725, (EU) 2023/2854 and Directives 2002/58/EC, (EU) 2022/2555 and (EU) 2022/2557 as regards the simplification of the digital legislative framework, and repealing Regulations (EU) 2018/1807, (EU) 2019/1150, (EU) 2022/868, and Directive (EU) 2019/1024 (Digital Omnibus), COM/2025/837 final.

- 19 Maximilian Schaefer and Geza Sapi, ‘Data Network Effects: The Example of Internet Search’ (2023) 65 Information Economics and Policy 101063.

- 20 For a broader analysis, see Alba Ribera Martínez, ‘Computational Presumptions Applied to AI Markets’ (2026) 6 Stanford Computational Antitrust 32.

- 21 Case DMA.100209 – SP – Alphabet – Article 6(11), Case Summary.

- 22 For instance, see Case DMA.100203 – Article 6(7) – Apple – iOS – SP – Features for Connected Physical Devices, Commission Implementing Decision of 19.3.2025 [2025] OJ C 4646. Commenting on them, see Alba Ribera Martínez, ‘Interoperability by Design or Denial? The Digital Markets Act’s Notion of Vertical Interoperability’ (2025), available at https://papers.ssrn.com/sol3/papers.cfm?abstract_id=5121387.

- 23 For evidence on the statement, see Ashwin Machanavajjhala, Daniel Kifer, Johannes Gehrke and Muthuramakrishnan Venkitasubramaniam, ‘ℓ-Diversity: Privacy Beyond k-Anonymity’ (2007) 1(1) ACM Transactions on Knowledge Discovery from Data (TKDD) 3.

- 24 Srivatsava Ranjit Ganta, Shiva Prasad Kasiviswanathan and Adam Smith, ‘Composition attacks and auxiliary information in data privacy’ (2008) KDD ’08: Proceedings of the 14th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining 265.

- 25 Case C-233/23, Alphabet and Others (Grand Chamber of the Court of Justice, 25 February 2025), para 76.

- 26 Ciara Kalmus, Kadambari Prasad and Tanja Salem, ‘What are Fair and Reasonable prices? Making a flexible concept tractable’ (Compass Lexecon, 6 June 2024), available at https://www.compasslexecon.com/insights/publications/what-are-fair-and-reasonable-prices-making-a-flexible-concept-tractable.
